
This is important as while you can convert 4 dimensional space to 2 dimensional space, you lose some of the variance (information) when you do this.

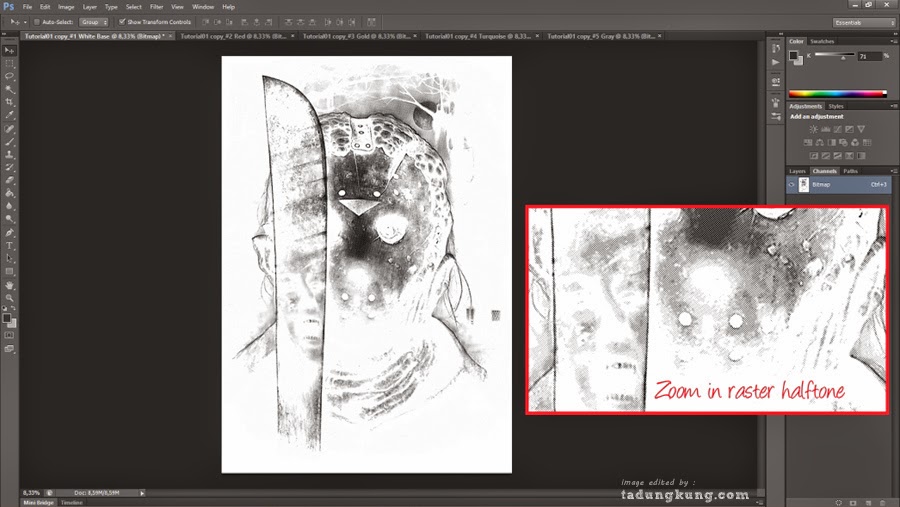

The explained variance tells you how much information (variance) can be attributed to each of the principal components. With that, let’s get started! If you get lost, I recommend opening the video below in a separate tab. The second part uses PCA to speed up a machine learning algorithm (logistic regression) on the MNIST dataset. To understand the value of using PCA for data visualization, the first part of this tutorial post goes over a basic visualization of the IRIS dataset after applying PCA. Another common application of PCA is for data visualization. This is probably the most common application of PCA. If your learning algorithm is too slow because the input dimension is too high, then using PCA to speed it up can be a reasonable choice. A more common way of speeding up a machine learning algorithm is by using Principal Component Analysis (PCA). One of the things learned was that you can speed up the fitting of a machine learning algorithm by changing the optimization algorithm. My last tutorial went over Logistic Regression using Python. Original image (left) with Different Amounts of Variance Retained
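To make the idea of explained variance concrete, here is a minimal sketch of going from 4 dimensions to 2 on the Iris data. It assumes scikit-learn is installed and loads the copy of Iris bundled with the library via `load_iris`, which may not be how the rest of this tutorial loads the data. The variable names (`X_scaled`, `X_2d`, `ratios`, `retained`) are only illustrative.

```python
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Assumption: use scikit-learn's bundled Iris data (150 flowers, 4 features).
X = load_iris().data

# PCA is sensitive to feature scale, so standardize each feature
# to zero mean and unit variance first.
X_scaled = StandardScaler().fit_transform(X)

# Project the 4-dimensional data onto its first 2 principal components.
pca = PCA(n_components=2)
X_2d = pca.fit_transform(X_scaled)

# Fraction of the total variance attributed to each principal component.
ratios = pca.explained_variance_ratio_
retained = ratios.sum()

print("shape before:", X_scaled.shape, "after:", X_2d.shape)
print("explained variance ratio per component:", ratios)
print(f"variance retained: {retained:.1%}, lost: {1 - retained:.1%}")
```

The two ratios add up to the share of the original variance that the 2-dimensional projection still carries. Whatever is left over is the information given up by dropping the other two components.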
